One Bad Data Point Can Break The Entire AI Stack For Streaming Publishers

AI agents are quickly becoming the new operating layer for media companies.

One system pulls viewing data.

Another analyzes engagement.

Another generates insights.

Another writes a report or recommendation.

In theory, the entire process becomes an automated intelligence pipeline: an “agentic” stack that continuously analyzes audience behavior and recommends strategic decisions.

But there’s a hidden risk in this architecture.

One bad data point can poison the entire system.

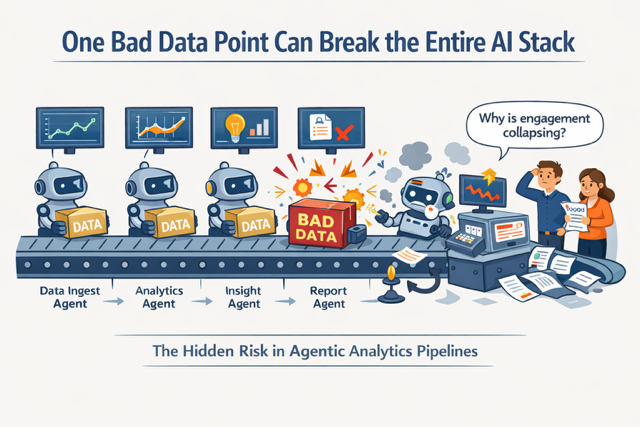

The Agentic Assembly Line

Media companies already operate complex data pipelines. Streaming platforms in particular rely on a wide range of systems to understand what audiences are doing.

A typical analytics workflow might pull from:

• your streaming app’s viewing telemetry

• an observability system tracking buffering and playback performance

• ad delivery logs from your ad server

• marketing campaign data from acquisition platforms

• social media engagement data from distribution channels

Individually, each system captures a different piece of the audience story.

Increasingly, AI tools promise to stitch those signals together into automated insights about audience behavior, ad performance, or content strategy.

When it works, it looks like magic.

But structurally, this kind of system behaves less like an intelligent assistant and more like an assembly line.

Each stage depends on the quality of the data that comes before it.

And if something goes wrong early in the chain, the error can quietly propagate through the entire workflow.

The Poison Pill Problem

Imagine a simple scenario.

Your analytics pipeline detects a drop in viewing minutes for a new show.

An AI agent flags it as a potential engagement issue.

Another agent analyzes audience trends and suggests viewers are losing interest.

Another recommends adjusting promotion strategy.

Another generates a report explaining why engagement is declining.

Except the problem wasn’t audience behavior at all.

It was a telemetry delay in the observability system tracking playback metrics.

The viewing minutes were incomplete.

By the time the insight reached decision-makers, a temporary data glitch had already turned into a strategic narrative.

One bad input poisoned the entire chain.

Platform Data Is Always Changing

This problem isn’t unique to streaming telemetry.

Most media analytics systems also depend heavily on data from external platforms.

Audience engagement might come from YouTube, TikTok, Instagram, or Meta.

These platforms expose data through APIs that appear stable on the surface.

But anyone who has worked with them long enough knows they’re constantly evolving.

Metrics change definitions.

Endpoints get deprecated.

Permissions shift.

Rate limits interrupt requests.

Fields appear or disappear.

Sometimes the APIs simply return incomplete data.

None of this is unusual. It’s simply how platform ecosystems evolve.

But when AI automation sits on top of those systems, even small inconsistencies can cascade through the pipeline.

The Automation Paradox

Ironically, the more automated these systems become, the harder it is to detect problems.

When humans build reports manually, they often notice anomalies. A number looks strange. A trend doesn’t match expectations. Someone double-checks the data.

But in an automated workflow, that sanity check can disappear.

AI agents ingest the data, process it, interpret it, and produce insights—all without questioning whether the underlying numbers were reliable in the first place.

The result is a system that appears sophisticated while quietly amplifying bad inputs.

This Isn’t Really an AI Problem

It’s a measurement problem.

Media companies have always struggled with fragmented data environments.

Streaming telemetry, ad logs, marketing attribution, and social performance all live in different systems with different definitions and collection methods.

Building reliable analytics pipelines has always required more than simply connecting APIs.

It requires:

• normalization across platforms

• monitoring for schema changes

• validation for incomplete data

• reconciliation across multiple sources

• constant infrastructure maintenance

Without those layers, automated insights can drift away from reality.

The Infrastructure Layer Underneath AI

At Mondo Metrics, we think about this challenge as a measurement infrastructure problem.

AI agents can absolutely accelerate analysis and reporting. But they still depend on the reliability of the data flowing through the system.

If the inputs aren’t validated, normalized, and monitored, automation simply makes mistakes happen faster.

Which means the real opportunity in AI-powered media analytics may not be building more agents.

It may be building the infrastructure that ensures those agents are working with trustworthy data in the first place.

The Question Media Companies Should Ask

AI will undoubtedly become a central part of the media analytics stack.

But before fully automating decision-making, companies should ask a simple question:

Who is responsible for validating the data feeding the AI?

Because in an agentic system, one bad data point isn’t just a minor glitch.

It can become the poison pill that wrecks the entire assembly line.